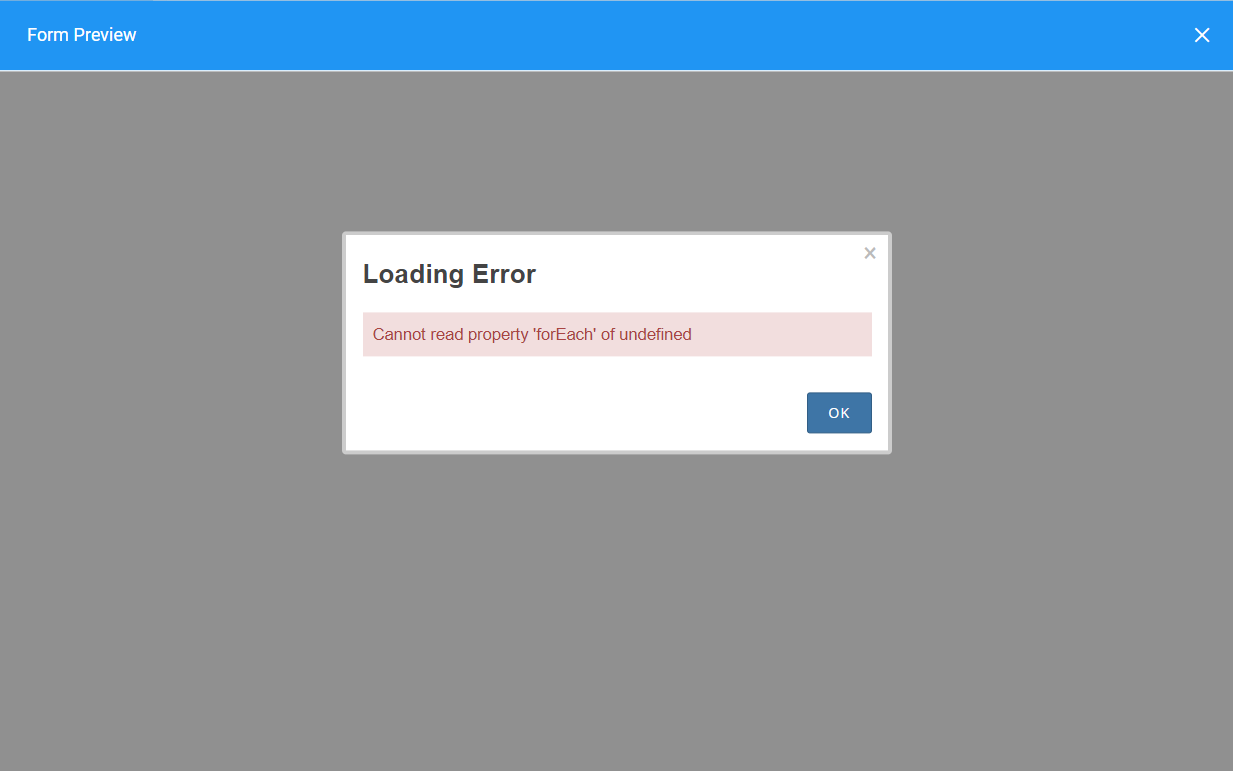

Error while loading nview engine

5/15/2023

We are experiencing an issue with Google Cloud Console, beginning at 07:12 US/Pacific, where users see billing errors when trying to access products' dashboards.

The Google Cloud Console is experiencing errors when users try to access some of its dashboards. Known affected dashboards include Google Compute Engine, Stackdriver, Google Kubernetes Engine, Google Cloud Storage, and Firebase. Users will experience billing errors when trying to access these pages.

An updated list of affected product dashboards is as follows: Google Compute Engine, Stackdriver, Google Kubernetes Engine, Google Cloud Storage, Firebase, Billing, App Engine, APIs, IAM, Cloud SQL, Dataflow, and BigQuery. For everyone who is affected, we apologize for the disruption. We will provide an update by Thursday, 10:30 US/Pacific with current details.

The issue with Google Cloud Console has been resolved for all affected projects as of Thursday, 08:58 US/Pacific. We will conduct an internal investigation of this issue and make appropriate improvements to our systems to help prevent or minimize future recurrence. We will provide a more detailed analysis of this incident once we have completed our internal investigation.

On Thursday, Google Cloud Console experienced a 40% error rate for all pageviews over a duration of 1 hour and 53 minutes. To all customers affected by this Cloud Console service degradation, we apologize. We are taking immediate steps to improve the platform’s performance and availability.

On Thursday from 07:10 to 09:03 US/Pacific the Google Cloud Console served 40% of all pageviews with a timeout error. Affected console sections include Compute Engine, Stackdriver, Kubernetes Engine, Cloud Storage, Firebase, App Engine, APIs, IAM, Cloud SQL, Dataflow, BigQuery and Billing.

The Google Cloud Console relies on many internal services to properly render individual user interface pages. The internal billing service is one of them, and is required to retrieve accurate state data for projects and accounts.

At 07:09 US/Pacific, a service unrelated to the Cloud Console began to send a large amount of traffic to the internal billing service. The additional load caused timeouts and failures of individual requests, including those from Google Cloud Console. This led to the Cloud Console serving timeout errors to customers when the underlying requests to the billing service failed.

REMEDIATION AND PREVENTION

Cloud Billing engineers were automatically alerted to the issue at 07:15 US/Pacific and Cloud Console engineers were alerted at 07:21. Both teams worked together to investigate the issue and, once the root cause was identified, pursued two mitigation strategies. First, we increased the resources for the internal billing service in an attempt to handle the additional load. In parallel, we worked to identify the source of the extraneous traffic and then stop it from reaching the service. Once the traffic source was identified, mitigation was put in place and traffic to the internal billing service began to decrease at 08:40.

In order to reduce the chance of recurrence we are taking the following actions. We will implement improved caching strategies in the Cloud Console to reduce unnecessary load and reliance on the internal billing service. The load shedding response of the billing service will be improved to better handle sudden spikes in load and to allow for quicker recovery should it be needed. Additionally, we will improve monitoring for the internal billing service to more precisely identify which part of the system is running into limits. Finally, we are reviewing dependencies in the serving path for all pages in the Cloud Console to ensure that necessary internal requests are handled gracefully in the event of failure.
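To make the caching and graceful-failure actions in this report more concrete, here is a minimal sketch of one possible approach: a time-to-live cache that serves the last known value when the backend call fails. This is not Google's implementation. The class name `StaleOnErrorCache`, the `fetch` callable standing in for a request to the internal billing service, and the status labels are all hypothetical and chosen only for illustration.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class StaleOnErrorCache:
    """TTL cache that falls back to the last known value when the backend fails.

    Hypothetical illustration only; `fetch` stands in for a call to a
    backend such as a billing service.
    """

    def __init__(
        self,
        fetch: Callable[[str], dict],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        # project_id -> (time the value was stored, value)
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get(self, project_id: str) -> Tuple[Optional[dict], str]:
        """Return (value, status); status is 'fresh', 'cached', 'stale' or 'unavailable'."""
        now = self._clock()
        entry = self._entries.get(project_id)

        # Within the TTL: answer from the cache and send no backend request.
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1], "cached"

        try:
            value = self._fetch(project_id)
        except Exception:
            # Backend overloaded or timing out: degrade instead of failing the page.
            if entry is not None:
                return entry[1], "stale"
            return None, "unavailable"

        self._entries[project_id] = (now, value)
        return value, "fresh"
```

A page renderer could call `cache.get(project_id)` and still draw the page when the status is `stale` or `unavailable`, for example by showing a notice instead of returning a timeout error. The trade-off is that stale state may be shown for a while, which may not be acceptable for every kind of billing data.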
